Dancing with Robots

By Amy Laviers

Choreography brings new perspectives on encoding motion into machines.


I’m standing inside an open-faced shipping container on the waterfront of a harbor, participating in an experience that is part interactive art, part exploration of robotic movement, and part contemporary dance performance. I take a deep breath in, and my belly presses into a tightly wrapped sheath of fabric. In response to the shape change in my torso caused by this breath, a large array of mirrors, spanning beyond my reach in every direction, begins to wiggle, splashing purple light in irregular, almost disco-like flashes, accompanied by the audible, regular hum of motors that create an even-keeled, robotic rhythm.

My own body’s natural movements wirelessly control these mirrored mechanical wings, which are physically affixed to the back of the shipping container. The dissonance between my independent but necessary breathing movement and my connection to a large, strange robot blurs the lines between conscious and unconscious control over a large-scale machine. A performer wears her own pair of robotic wings, also controlled by her breath, and together—with a blustery, Antarctic southerly wind blowing over us and our installation—we explore how movement carries information and how, when situated inside an artistic frame, machines can be more expressive.

Photo by Colin Edson for Kate Ladenheim and the RAD Lab

This interactive installation, titled Babyface, is a collaboration between The People Movers (a dance company) and the Robotics, Automation, and Dance (RAD) Lab at the University of Illinois at Urbana-Champaign. The installation was part of The Performance Arcade in Wellington, New Zealand, held in February 2020. The performer, a member of the New Zealand–based Footnote Dance Company, interacts with participants who wear breath sensors that activate the large wings. Part of the allure of the installation is the sense of magical control created by coaching participants to notice temporal associations between machine actions and their own, even though they are not visibly connected to the machine.

Two tiny wireless devices connect the breath sensor strapped to my chest and the large pair of wall-mounted wings: a transmitter that generates and transmits radio waves to send signals, and a receiver that captures and processes those signals. These devices rely on principles from communication theory, which is based on a model pioneered by the late American mathematician Claude Shannon, often called the founder of information theory. Shannon framed communication as the process of transmitting information from a source to a destination across a channel. His formal architecture, in which information is systematically encoded, sent, and then decoded, defined not only how to represent data efficiently but also the fundamentals of how data can be exchanged, and it unified communication engineering.
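To make Shannon's chain of source, encoder, channel, decoder, and destination concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the devices used in Babyface: text is encoded as UTF-8 bits, and the channel is modeled as a binary symmetric channel that flips each bit independently with probability `flip_prob`. The function names are hypothetical.

```python
import random


def encode(message: str) -> list[int]:
    """Source encoder: turn text into a flat list of bits (8 per UTF-8 byte)."""
    bits = []
    for byte in message.encode("utf-8"):
        bits.extend((byte >> shift) & 1 for shift in range(7, -1, -1))
    return bits


def channel(bits: list[int], flip_prob: float, rng: random.Random) -> list[int]:
    """Binary symmetric channel: flip each bit independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]


def decode(bits: list[int]) -> str:
    """Decoder at the destination: regroup bits into bytes, then bytes into text."""
    data = bytearray()
    for i in range(0, len(bits), 8):
        byte = 0
        for b in bits[i:i + 8]:
            byte = (byte << 1) | b
        data.append(byte)
    # Noise can produce invalid UTF-8, so undecodable bytes become U+FFFD.
    return data.decode("utf-8", errors="replace")


if __name__ == "__main__":
    rng = random.Random(42)
    sent = "breathe in"
    for p in (0.0, 0.05):
        print(p, repr(decode(channel(encode(sent), p, rng))))
```

With `flip_prob=0.0` the destination recovers the message exactly; raising it shows how noise corrupts individual bits, which is the problem Shannon's theory of reliable communication over noisy channels addresses.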

This model applies to our understanding of communication more broadly, and my group uses it in robot design and in developing human-robot interaction studies. To embed information in robotic movement and capitalize on existing human conventions in movement interpretation, we need to understand how other engineered systems communicate successfully with humans. The standard ASCII coding system (American Standard Code for Information Interchange) encodes 128 characters (letters, numbers, punctuation marks, and control codes) as distinct binary numbers. (In a binary coding system, “0” is a transistor in the “off” position and “1” is a transistor in the “on” position in the computer hardware.) ASCII is fine for transmitting text, but it is not rich enough to let a programmer use the increasingly common set of emoji characters, because it uses only 1 byte of information to encode each character. A byte is 8 bits, which corresponds to 8 transistors in memory in an on/off pattern. This representational constraint means that ASCII can encode only a finite number of symbols: Its 128 codes need just 7 bits, and even a full byte offers at most 256 distinct patterns.
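The arithmetic behind those limits can be checked in a few lines of Python. This sketch uses only the standard library; `bits_needed` is a hypothetical helper that computes the minimum number of bits needed to give each symbol its own binary code.

```python
import math


def bits_needed(n_symbols: int) -> int:
    """Minimum number of bits that give each of n_symbols a distinct binary code."""
    if n_symbols < 1:
        raise ValueError("need at least one symbol")
    return math.ceil(math.log2(n_symbols))


if __name__ == "__main__":
    print(bits_needed(128))         # 7: ASCII's 128 codes fit in 7 bits
    print(2 ** 8)                   # 256: distinct patterns in one 8-bit byte
    print(format(ord("A"), "08b"))  # 01000001: "A" is code 65
```

Each added bit doubles the number of distinguishable symbols, which is why richer symbol sets demand more hardware.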

A common replacement for ASCII is the newer coding scheme Unicode, whose widely used encodings, such as UTF-8, take up to 4 bytes (32 bits) in computer hardware to represent each symbol, allowing more than a million characters to be encoded. This highlights an important relationship between expression and complexity, as well as between hardware and information: We need more hardware and a richer scheme of encoding and decoding to express more complex messages.
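Python strings hold Unicode code points, and `str.encode("utf-8")` produces UTF-8, one common Unicode encoding in which a character occupies 1 to 4 bytes. The sketch below, with hypothetical helpers `utf8_length` and `fits_in_ascii`, shows byte counts growing for less common characters and confirms that an emoji has no ASCII code at all.

```python
# Unicode code points run from U+0000 to U+10FFFF.
CODE_POINTS = 0x10FFFF + 1  # 1,114,112 possible code points


def utf8_length(ch: str) -> int:
    """Number of bytes UTF-8 uses for a single character."""
    return len(ch.encode("utf-8"))


def fits_in_ascii(ch: str) -> bool:
    """True if the character has an ASCII code (0 to 127)."""
    try:
        ch.encode("ascii")
        return True
    except UnicodeEncodeError:
        return False


if __name__ == "__main__":
    for ch in ["A", "é", "€", "😀"]:
        label = "ASCII" if fits_in_ascii(ch) else "not ASCII"
        print(f"U+{ord(ch):04X}", utf8_length(ch), "bytes,", label)
```

The variable length is a design trade-off: ordinary ASCII text still costs 1 byte per character, and only rarer symbols pay for the extra hardware.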

For the Babyface installation, I didn’t need a complex message to control the mirrored wings. I only needed to differentiate between two states, on and off. On the transmitter end, I associated states of breathing with numbers that were already coded in binary; on the receiver end, I decoded those states, associating them with states of machine activation (“turn the machine on” corresponded to 1 and “turn the machine off” corresponded to 0). This association made the programming much easier, as I could send a single byte of information coded in binary rather than a long string of bytes in a more complex, more expressive coding scheme. The structure of my desired signal changed the requisite encoding scheme and lowered the hardware requirements for my devices.
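The one-bit scheme described above can be sketched in Python. Everything specific here is an assumption for illustration: the sensor is taken to report a normalized stretch value between 0 and 1, the threshold of 0.5 is invented, and `encode_breath` and `decode_command` are hypothetical names, not the installation's actual code. The radio hardware is left out; only the encoding and decoding of the payload are shown.

```python
# Hypothetical calibration point; not the value used in the installation.
INHALE_THRESHOLD = 0.5


def encode_breath(stretch: float) -> bytes:
    """Transmitter side: map a normalized stretch reading to one byte, 1 or 0."""
    state = 1 if stretch >= INHALE_THRESHOLD else 0
    return bytes([state])


def decode_command(packet: bytes) -> str:
    """Receiver side: map the received byte back to a machine command."""
    if len(packet) != 1 or packet[0] not in (0, 1):
        raise ValueError(f"unexpected packet: {packet!r}")
    return "on" if packet[0] == 1 else "off"


if __name__ == "__main__":
    for reading in (0.1, 0.7, 0.4, 0.9):
        packet = encode_breath(reading)
        print(reading, packet, decode_command(packet))
```

Because only two states exist, a single byte (really a single bit) is enough, and the receiver can reject anything else as noise.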

But how should robots, rather than transistors, embed information through motion? I wanted to push into new territory to begin to encode variable expression through motion. My group at the RAD Lab began searching for ways for robotic forms to more effectively act as expressive tools. That effort required collaboration between roboticists and choreographers.

Guided by Isaac Newton’s laws of motion, engineers are trained to measure the displacement, velocity, and forces apparent in motion. Notably, robots excel at producing very large (or very small) changes in these quantities, so engineers use these machines to manufacture products at scales, large and small, that human hands cannot achieve on their own. By contrast, choreographers are trained to consider the multiplicity of possibilities in a single action executed by a human form; such movement is clearly rich with information. Translating that multiplicity into robot movement poses a significant challenge.


Engineers often approach programming robotic movement in ways they consider efficient, such as minimizing hardware complexity or programming movement that fits within specific constraints. However, by better understanding the subtleties of human movement through dance, we can better define how to encode movement.

When performers extend a hand a given distance, they carefully select from among many possible options. A rough, jerky extension toward another onstage player may indicate a sense of urgency or even aggression in the character, whereas a gentle, slow extension may communicate the character’s desire for a love interest. Skilled performers have many options when they choose how to extend their hand, and they work to create and refine even more options. Each individual artist creates his or her own encoding scheme. These artists have unique intellects that embed ideas, experiences, emotions, and other source material into their work, filling their movement with information.

Using the Laban/Bartenieff Movement System, a language for movement (see figure below), the RAD Lab describes and breaks down these complex, information-laden human motions into discrete pieces, which are used to program artificial movement. Rather than programming a specific type of robot with every step needed to, for example, clap its hands, which is detail intensive, we can map the human movements involved in hand clapping as spatial commands. These high-level patterns of motion can then be used as a platform-invariant control system: Once the motion of clapping is encoded, it can be applied to various distinct systems. This top-down approach uses fewer commands to produce complex movements.

Barbara Aulicino adapted from materials provided by Umer Huzaifa
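The idea of a platform-invariant command can be sketched in Python. This is a hypothetical illustration, not the RAD Lab's control system: `EffortCommand` borrows two of the Laban Effort factors (Time, sudden versus sustained; Space, direct versus indirect) as numbers from -1 to 1, and two invented robot classes each translate that same command into their own hardware parameters. All limits are assumed values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EffortCommand:
    """Hypothetical platform-independent descriptor, loosely inspired by Laban Effort.

    Each factor runs from -1 to 1:
      time:  -1 = sustained, +1 = sudden
      space: -1 = indirect,  +1 = direct
    """
    time: float
    space: float


def unit(x: float) -> float:
    """Map a factor in [-1, 1] onto [0, 1], clamping out-of-range input."""
    return (max(-1.0, min(1.0, x)) + 1.0) / 2.0


class ArmPlatform:
    """Hypothetical manipulator; the speed and detour limits are assumed values."""

    def __init__(self, min_speed=0.2, max_speed=2.0, max_detour=0.15):
        self.min_speed = min_speed    # rad/s
        self.max_speed = max_speed    # rad/s
        self.max_detour = max_detour  # m, sideways bulge of the hand path

    def realize(self, cmd: EffortCommand) -> dict:
        return {
            "joint_speed_rad_s": self.min_speed + unit(cmd.time) * (self.max_speed - self.min_speed),
            "path_detour_m": (1.0 - unit(cmd.space)) * self.max_detour,
        }


class WheeledPlatform:
    """Hypothetical mobile base; the speed and weave limits are assumed values."""

    def __init__(self, max_speed=0.5, max_weave_deg=30.0):
        self.max_speed = max_speed          # m/s
        self.max_weave_deg = max_weave_deg  # heading oscillation amplitude

    def realize(self, cmd: EffortCommand) -> dict:
        return {
            "linear_speed_m_s": unit(cmd.time) * self.max_speed,
            "heading_weave_deg": (1.0 - unit(cmd.space)) * self.max_weave_deg,
        }


if __name__ == "__main__":
    sudden_direct = EffortCommand(time=1.0, space=1.0)
    for robot in (ArmPlatform(), WheeledPlatform()):
        print(type(robot).__name__, robot.realize(sudden_direct))
```

The design choice being illustrated is that the high-level command stays the same while each platform owns its translation, so supporting a new robot means writing one `realize` method rather than re-specifying every motion.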

In conjunction with developing efficient ways of sending such signals, we need to think carefully about our hardware for moving. Using more-complex hardware enables us to encode a more complex message in motion. Just as we counted transistors for the wireless communication system to transmit symbols, we should count degrees of freedom for the mechanical systems through which we would now like to embed a signal. For example, I could use a single LED to communicate the text of Moby-Dick, but instead I’ll likely choose a more expressive interface, such as an e-reader, to send this information to the viewer. Similarly, with robots, I could choose a single Roomba vacuum cleaner to encode an underlying motion signal, but I would likely be more successful if I used a humanoid robot.
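Counting degrees of freedom can be turned into a rough bound on how much a single pose can say, in the same spirit as counting transistors. This sketch rests on a strong, hypothetical assumption: each degree of freedom can be set independently to one of a fixed number of positions a viewer can tell apart, so a pose carries at most DOF × log2(levels) bits. The example platforms and level counts are illustrative, not measurements of real robots.

```python
import math


def pose_capacity_bits(dof: int, levels_per_dof: int) -> float:
    """Upper bound on the bits one instantaneous pose can carry.

    Assumes every degree of freedom can be set independently to one of
    `levels_per_dof` positions a viewer can tell apart (a strong simplification).
    """
    if dof < 0 or levels_per_dof < 1:
        raise ValueError("need dof >= 0 and levels_per_dof >= 1")
    return dof * math.log2(levels_per_dof)


# Illustrative platforms; the joint and level counts are assumptions.
EXAMPLES = {
    "single LED, on or off": (1, 2),
    "planar base (x, y, heading), 8 levels each": (3, 8),
    "hypothetical 25-joint humanoid, 8 levels each": (25, 8),
}

if __name__ == "__main__":
    for name, (dof, levels) in EXAMPLES.items():
        print(f"{name}: {pose_capacity_bits(dof, levels):g} bits per pose")
```

Real capacity is lower, because joints are coupled and viewers cannot resolve every position; the sketch only shows that the bound grows linearly with the number of degrees of freedom, just as symbol capacity grows with the number of bits.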

Counting transistors is an established way of benchmarking computer processor capacity; it is exactly the measure behind what we call Moore’s law, the observation that the number of transistors on a chip doubles roughly every two years, which is usually plotted on a logarithmic scale to show the explosion in computing capacity. Even though this method of counting overlooks many of the critical, dynamic elements of computer function, such as processor speed, it is a useful benchmark. When we apply a similar count to the external components of robots, to quantify how complex a message they might instantaneously encode, we see that roboticists have not been thinking like dancers: They have not been striving to create more and more useful tools for expressing ideas or information. Instead, the external complexity of robots has been more or less stagnant over the past 15 years, even as their internal complexity has greatly increased, along with their capacity for representing patterns, as a result of progress in computing.
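The logarithmic plotting follows from the form of Moore's law. As a rough statement, assuming a transistor count N_0 in a reference year t_0 and a doubling period T of about two years:

```latex
\begin{aligned}
N(t) &= N_0 \, 2^{(t - t_0)/T}, \qquad T \approx 2 \text{ years} \\
\log_2 N(t) &= \log_2 N_0 + \frac{t - t_0}{T}
\end{aligned}
```

On a logarithmic axis the count therefore rises along a straight line with slope 1/T, so each step up the axis marks another doubling of hardware.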

Due to the simplicity of their current hardware, robots cannot precisely replicate human movement. However, in our study of the Laban/Bartenieff-based method, we found that more effective imitation could be accomplished in robots by providing human designers with a new, choreography-inspired tool. When participants in the study viewed robotic movements derived from our method, they judged the robotic movements to be the same as the movements performed by the human designer. Thus, imitation of the human by the robot was accomplished from a perceptual point of view. Although the complexity of the message that could be sent through movement was limited by the hardware, using human movement analysis to encode the message resulted in successful decoding of the message by the viewer.

When we consider the amount of information that can be transmitted with a given moving body, we have several reasons to believe that humans and other animals outpace their artificial counterparts. The Babyface installation compares the action of a single pair of wings (worn by the performer) with the action of many fragmented “mechanical feathers” (controlled by the participant) to explore the feeling of greater complexity in motion.

Part of this complexity is the ability of natural systems to adapt to variables in their environments. Traditionally, robotic movements are optimized with respect to their physical constraints, so that the robot performs in the most efficient way. To program a robot to, for example, lift its arm and move it as if to wave hello, a set collection of movements is encoded, and the trajectory of the arm is selected by optimizing the dynamics of the physical system. Roboticists specify a cost to minimize, such as a tally of how much energy the movement uses. This approach is effective from the point of view of using the least force possible to execute the “wave,” but when we view the robot as an information source, we recognize the critical nature of the multiplicity of choices in motion. In the RAD Lab, instead of measuring energetic costs, we have been exploring how many patterns of movement can achieve this desired meaning; more specifically, how many different ways this robot can move that will be recognized as a wave. (The video below summarizes how we read, translate, and interpret motion in our lab.)
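The contrast between minimizing a cost and counting acceptable motions can be illustrated with a toy model. In this hypothetical sketch, a single joint traces θ(t) = A sin(2πft) for 2 seconds; the energy proxy is the integral of squared angular velocity; and `reads_as_wave` is an invented stand-in for viewer recognition (amplitude between 0.2 and 0.8 rad, at least two full cycles, at most 2 Hz). None of these numbers come from the RAD Lab.

```python
import math
from itertools import product

DURATION_S = 2.0  # hypothetical length of the gesture


def energy_proxy(amplitude: float, freq: float) -> float:
    """Integral of squared angular velocity for theta(t) = A*sin(2*pi*f*t).

    Over a whole number of cycles this equals A**2 * (2*pi*f)**2 * DURATION_S / 2.
    """
    omega = 2 * math.pi * freq
    return amplitude ** 2 * omega ** 2 * DURATION_S / 2


def reads_as_wave(amplitude: float, freq: float) -> bool:
    """Invented stand-in for 'a viewer would recognize this as a wave'."""
    cycles = freq * DURATION_S
    return 0.2 <= amplitude <= 0.8 and cycles >= 2 and freq <= 2.0


AMPLITUDES_RAD = [k / 10 for k in range(1, 11)]  # 0.1 to 1.0 rad
FREQUENCIES_HZ = [k / 2 for k in range(1, 6)]    # 0.5 to 2.5 Hz, whole cycles in 2 s

candidates = [(a, f) for a, f in product(AMPLITUDES_RAD, FREQUENCIES_HZ) if reads_as_wave(a, f)]

# Traditional framing: pick the single cheapest acceptable motion.
best = min(candidates, key=lambda c: energy_proxy(*c))

if __name__ == "__main__":
    print("energy-optimal wave:", best)          # (0.2, 1.0)
    # Information framing: how many distinct motions still read as a wave?
    print("acceptable waves:", len(candidates))  # 21
```

The optimizer returns one answer, the smallest, slowest wave on the grid, while the enumeration shows that 21 parameter pairs on this grid pass the same hypothetical recognition test.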

Dancers study movement and taxonomies to help them name and catalog their design space. Through their training, to return to information theory, we may say that they are refining not only their encoding scheme but also their decoding scheme, adding more detail and nuance to their ability to sort through noise for signal. In our research, we ask: How many ways can a human walk? The question that follows is then: How many ways can a robot walk?

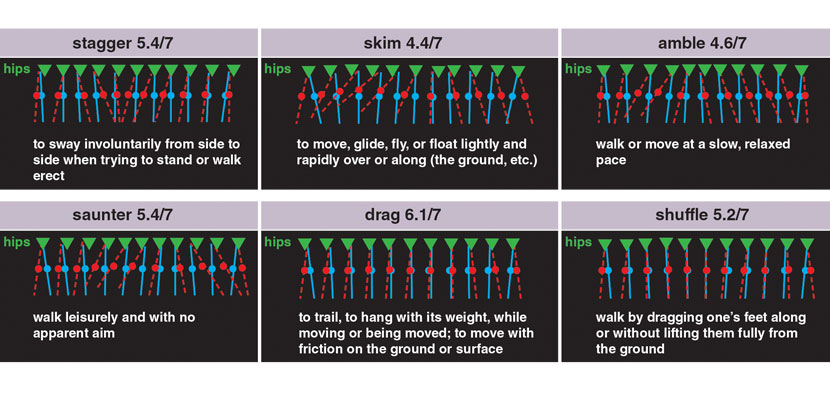

Collaborating with Cat Maguire of the Laban/Bartenieff Institute of Movement Studies, our group validated six styles of walking gaits that human users reliably match to various English terms for walking. We wanted to explore new ways of generating various modes of gait for an artificial system. We took a rather shocking approach to our experiment: Instead of optimizing toward a particular objective, such as energy conservation, we entered variable terms in our constraints that changed features of the gait to produce variation. Essentially, we are using math to say, “We’ll take any gait that satisfies the physics of our system and desired design parameters.” The benefit of this approach was that we obtained hundreds of valid gaits, and now we can explore labeling them for human viewers across many contexts.
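The idea of accepting any gait that satisfies the constraints, instead of ranking gaits by a cost, can be sketched with rejection sampling. This is a swapped-in, simplified technique, not the RAD Lab's formulation: the gait parameters, bounds, and limits below belong to an imaginary biped, and `Gait`, `is_feasible`, and `sample_gaits` are hypothetical names.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Gait:
    step_length_m: float
    cadence_hz: float       # steps per second
    stance_fraction: float  # share of each stride a foot spends on the ground
    clearance_m: float      # peak foot height during swing


# Hypothetical limits for an imaginary biped; not taken from any real robot.
MAX_STEP_M = 0.6
MAX_SPEED_M_S = 1.2
MAX_CLEARANCE_M = 0.15


def is_feasible(g: Gait) -> bool:
    """Stand-in for 'satisfies the physics of our system and design parameters'."""
    speed = g.step_length_m * g.cadence_hz
    return (
        g.step_length_m <= MAX_STEP_M
        and speed <= MAX_SPEED_M_S
        and g.stance_fraction > 0.5  # walking keeps a double-support phase
        and g.clearance_m <= MAX_CLEARANCE_M
    )


def sample_gaits(n_trials: int, seed: int = 0) -> list[Gait]:
    """Rejection sampling: keep every random gait that passes, with no cost ranking."""
    rng = random.Random(seed)
    kept = []
    for _ in range(n_trials):
        g = Gait(
            step_length_m=rng.uniform(0.1, 0.8),
            cadence_hz=rng.uniform(0.5, 3.0),
            stance_fraction=rng.uniform(0.3, 0.8),
            clearance_m=rng.uniform(0.01, 0.25),
        )
        if is_feasible(g):
            kept.append(g)
    return kept


if __name__ == "__main__":
    gaits = sample_gaits(2000)
    print(len(gaits), "feasible gaits kept")
    steps = [g.step_length_m for g in gaits]
    print("step length range:", min(steps), max(steps))
```

Because nothing is minimized, the kept gaits stay spread across the feasible range, which is the kind of varied pool a labeling study could then work with.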

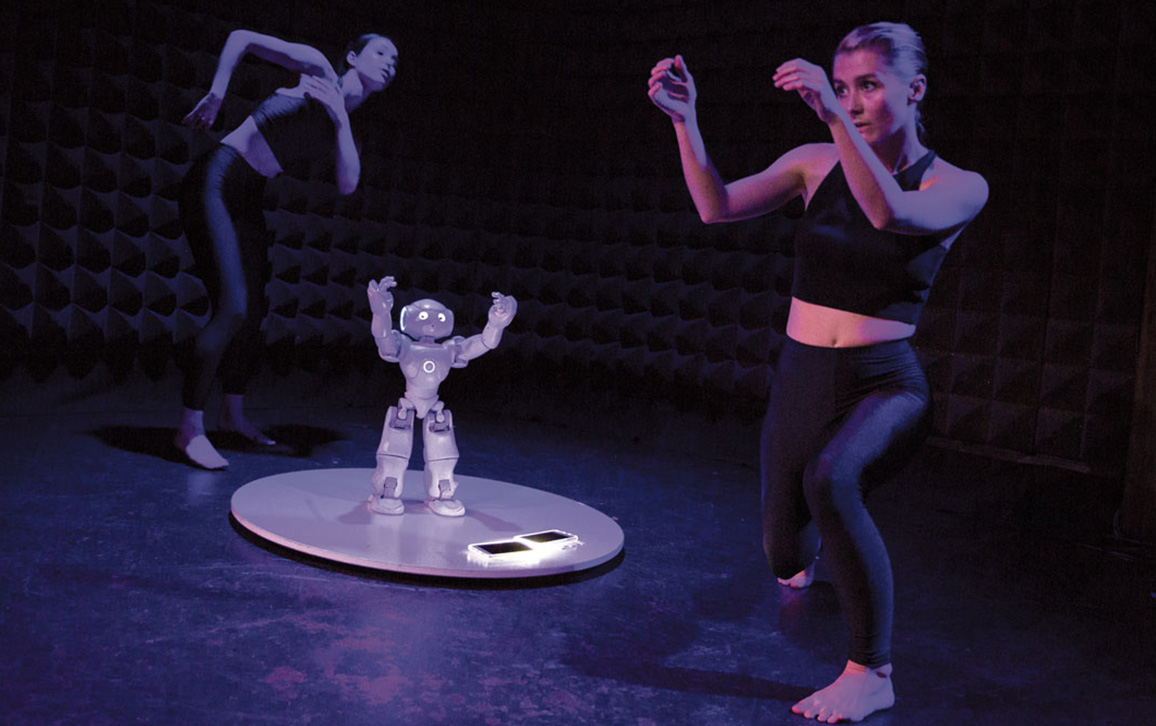

Photo by Yi-Chun Wu of “Trio,” an excerpt of Time to Compile, for the Dance NOW Festival.

In developing the procedure we used for labeling these variable gaits, we were led by Shannon’s foundational work and by choreographic practice to deviate from typical research practice. We looked for emotionally neutral labels, such as “skim” and “shuffle,” which do not assume as much of a particular decoding scheme on the part of the viewer and are consistent with how dancers describe motion.

Removing emotional evaluation of the motion by a particular viewer from the labels allows us to create less-biased design tools, which in turn allow robots to deliver the intended meaning across multiple presentations. Communication occurs within a specific context or environment. In the previous example, the wave action may not always mean “hello.” Consider the same action with the robot’s arm now pressed against a pane of glass: The waving action becomes a washing action. Describing the movement in purely physical terms makes it easier to determine where and how the wave begins to look like “hello” rather than “window washing.” This example shows that the specifics of movement alone do not create meaning in motion. Crafting context and considering the culture, training, and expectations of the viewer (processes in which artists are experts) ensures that the signal can be heard through the noise.

Machines cannot create meaning on their own. In my group’s research, as in our daily lives, we must integrate situational context, environmental context, physical form, movement design, viewer preparation, cultural conventions, and human expectation to create that meaning. Our collaborations are ultimately about supporting harmony between human and machine—the kind of harmony that could improve the way we interact with machines in our factories, hospitals, schools, and homes in the future.


American Scientist Comments and Discussion
